Raspberry Pi

IoT & Edge

Healthcare

SaaS Application

Law Enforcement

SaaS Application

Research & Development

SaaS Application

SaaS | SDKs

SaaS Applications

Defense

Intelligence

Robotics

OS & Infra

Defense

OS & Infra

Private AI Server

On Premises

Cloud AI shares the context and information behind our decisions with systems outside our control.

Offline Intelligence is built for regulated industries that require absolute confidentiality. All sensitive information stays protected and is handled in line with the strictest regulations.

Defense

Air-gapped

Field deployable

Legal

Attorney-client privilege

Document Intelligence

Healthcare

HIPAA compliant

Clinical AI

OFFLINE INTELLIGENCE

THE INFRASTRUCTURE LAYER

Private

Infrastructure

Persistent memory

Hot · Warm · Vault

Any hardware

x86 · ARM · GPU · Edge

Multiple languages

Py · Rust · JS · C++ · Java

Open source

Apache 2.0 · v0.1.5

"A model that's 75% as capable but runs on a denied, disrupted, intermittent tactical edge beats GPT-5 and its equivalents in a data center."

Memory that compounds

Every session adds to a permanent, queryable knowledge asset. The longer your organization uses Offline Intelligence, the more valuable it becomes. Unlike cloud AI, where knowledge resets when the session ends, our memory architecture accumulates institutional knowledge that belongs to you.

Memory Index

Your Organization's Accumulated Knowledge

0

Knowledge accumulated

0

Sessions processed

0

Documents analyzed

0

Bytes transmitted externally

This system has accumulated 2,847 facts across 156 sessions on-device

Common Questions

Frequently Asked Questions

We believe AI sovereignty is a fundamental right. The most important industries in the world, including defense, healthcare, law, and critical infrastructure, have been locked out of the AI revolution because every major AI system was built on the same flawed assumption: that your data must leave your infrastructure to be processed.

Our mission is to dismantle that assumption. We build the infrastructure layer that brings enterprise-grade AI permanently inside your walls, so that powerful AI and complete privacy are never a tradeoff again.

We are a pre-seed company with working prototypes and MVPs across multiple product lines, all available today.

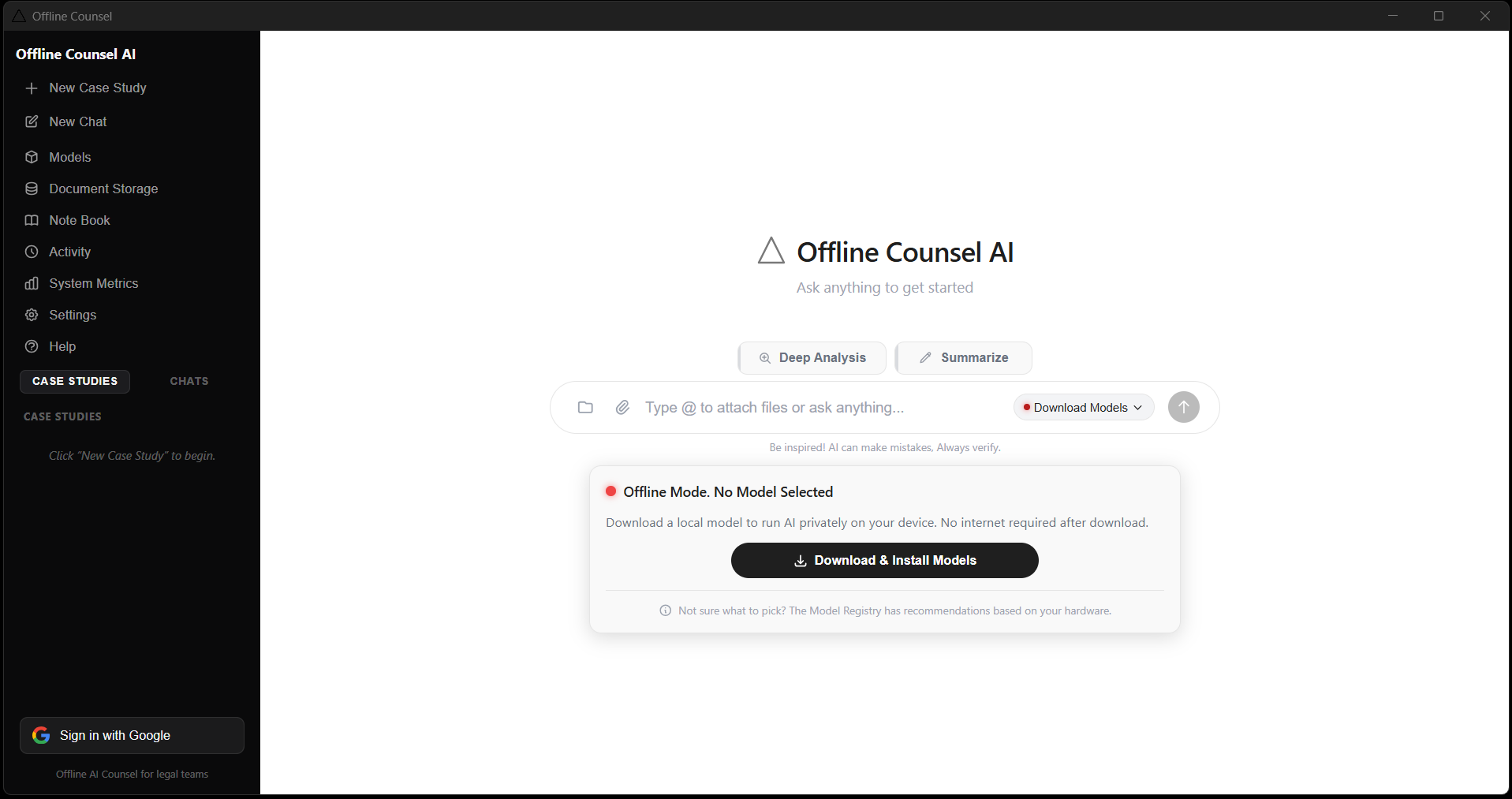

Our Chat Interface runs locally on Windows and lets users run Llama 4, DeepSeek, Mistral, Qwen, and other open models entirely on their own hardware, free forever. Our CLI, Terminal, and CLAW coding agent are in active development as free, open source tools for developer teams. Offline Counsel for legal teams and Offline Health for clinical environments are built and ready for paid pilot deployments.

The foundation of every product is our Rust-based inference runtime: stateful, hardware-agnostic, and built from the ground up for air-gapped and disconnected environments. We are opening SaaS pilots with legal and healthcare organizations now, while releasing our developer tools publicly to build community and establish the open source ecosystem.

Three revenue tiers built for three audiences, each reinforcing the next.

Free and open source developer tools: our Chat Interface, CLI, CLAW agent, and SDKs are free forever. They build community, drive adoption, and establish us as the default infrastructure for private AI development. This is our distribution engine, not a cost center.

Paid SaaS for legal and healthcare: Offline Counsel and Offline Health are subscription products with on-premise and managed deployment options configured for compliance requirements. These pilots generate revenue and, more importantly, produce the documented track record that qualifies us for our next step.

Custom edge solutions for defense and large enterprises: air-gapped field deployments, enterprise memory infrastructure, and custom model configurations priced on contract. Getting a defense contract requires a proven vendor. Legal and healthcare pilots are how we become one.

We are building toward a fundamentally different AI architecture for the world, one that is distributed, sovereign, and efficient rather than centralized, dependent, and energy-intensive.

Today's cloud AI model requires massive data centers, constant connectivity, and the continuous transfer of sensitive data across networks. Our infrastructure replaces that with intelligent local processing, on the device, inside the building, within the trust boundary. The result is dramatically lower latency, zero data exposure, and a fraction of the energy footprint of cloud alternatives.

At scale, this means a distributed intelligence network where every hospital, every factory, every autonomous system, and every edge device operates with full AI capability, independently, privately, and without depending on infrastructure they do not own or control. That is what AI sovereignty looks like at a civilizational level.

We offer a complete stack across three layers, designed to meet you wherever you are in your AI journey.

For end users and enterprises, we offer ready-to-use applications that bring private AI into your workflows immediately, no technical setup required, no data leaving your environment.

For developers and engineering teams, we offer native SDKs and bindings across all major programming languages, so any team can embed our inference and memory management capabilities directly into their own systems and applications.

For enterprises with specific compliance or deployment requirements, we offer private deployment solutions configured for your infrastructure, your regulatory environment, and your security standards.

Every product in our ecosystem shares one property: zero external dependencies. They work offline, stay private, and run entirely on hardware you own and control.

Privacy in our system is not a policy or a setting. It is an architectural property that cannot be switched off, overridden by a vendor, or compromised by a breach of infrastructure you do not control.

Every component of the inference pipeline, from model loading to memory storage, executes entirely within your hardware environment. There are no external API calls. No telemetry. No data transmission of any kind. To confirm this, we have run our systems under full network packet capture for extended periods and observed zero egress.
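As an illustration of how a zero-egress check over captured traffic might work, here is a minimal Python sketch. It is not our test harness: the `TRUSTED_NETWORKS` list and the `find_egress` helper are hypothetical names chosen for this example, and it assumes the destination addresses have already been extracted from a packet capture by whatever tool you use.

```python
import ipaddress

# Hypothetical trust boundary for this example: loopback plus one internal range.
# A real deployment would list its own networks here.
TRUSTED_NETWORKS = [
    ipaddress.ip_network(n) for n in ("127.0.0.0/8", "::1/128", "10.0.0.0/8")
]


def is_inside_boundary(address):
    """Return True if the address falls inside any trusted network."""
    addr = ipaddress.ip_address(address)
    # Membership is always False when the IP versions differ, so IPv4 and
    # IPv6 networks can safely share one list.
    return any(addr in net for net in TRUSTED_NETWORKS)


def find_egress(destinations):
    """Return the sorted, de-duplicated destinations outside the boundary."""
    return sorted({d for d in destinations if not is_inside_boundary(d)})


if __name__ == "__main__":
    # Destination addresses as they might appear in a capture summary.
    captured = ["127.0.0.1", "10.0.0.5", "::1"]
    leaks = find_egress(captured)
    print("zero egress" if not leaks else f"egress detected: {leaks}")
```

An empty result means every observed destination stayed inside the boundary you defined. Any other result names the addresses to investigate.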

For regulated industries, this means the data-handling guarantees that HIPAA, FINRA, CMMC, and GDPR compliance depends on are structural, not a contractual promise from a third party. You own the hardware. You own the model. You own the data. We provide the infrastructure layer that makes it all work together.

The difference is architectural, not cosmetic.

Cloud AI services process your data on infrastructure owned and operated by a third party. Your queries, your documents, your context, and your outputs all travel across networks you do not control and are processed in environments you cannot audit. For consumer applications, this is an acceptable tradeoff. For regulated industries, it is often a legal disqualifier.

Our AI runtimes are stateful and self-contained. The model runs on your hardware. The memory system uses a tiered Hot, Warm, and Detail Vault architecture that persists conversation context and learned patterns locally in SQLite, so your AI sessions accumulate intelligence over time without any of that state ever leaving your environment.
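To make the tiered idea concrete, here is a minimal Python sketch of hot, warm, and vault tiers persisted in a local SQLite file. It is an illustration under stated assumptions, not our runtime's actual schema or API: the table layout, the age thresholds, and the `open_store`, `remember`, `age_tiers`, and `recall` helpers are all hypothetical.

```python
import sqlite3
import time


def open_store(path=":memory:"):
    """Open (or create) a local store; pass a file path to persist to disk."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS memory (
               id INTEGER PRIMARY KEY,
               session_id TEXT NOT NULL,
               content TEXT NOT NULL,
               tier TEXT NOT NULL CHECK (tier IN ('hot', 'warm', 'vault')),
               last_access REAL NOT NULL
           )"""
    )
    return conn


def remember(conn, session_id, content, now=None):
    """Store a new item in the hot tier and return its row id."""
    now = time.time() if now is None else now
    cur = conn.execute(
        "INSERT INTO memory (session_id, content, tier, last_access) "
        "VALUES (?, ?, 'hot', ?)",
        (session_id, content, now),
    )
    conn.commit()
    return cur.lastrowid


def age_tiers(conn, now, warm_after=3600.0, vault_after=86400.0):
    """Demote items by idle time (thresholds are assumed values, in seconds)."""
    # Move the oldest items to the vault first, then cool remaining hot items.
    conn.execute(
        "UPDATE memory SET tier = 'vault' WHERE ? - last_access >= ?",
        (now, vault_after),
    )
    conn.execute(
        "UPDATE memory SET tier = 'warm' "
        "WHERE tier = 'hot' AND ? - last_access >= ?",
        (now, warm_after),
    )
    conn.commit()


def recall(conn, keyword, now=None):
    """Return (content, tier_found_in) matches and promote them back to hot."""
    now = time.time() if now is None else now
    rows = conn.execute(
        "SELECT id, content, tier FROM memory WHERE content LIKE ? ORDER BY id",
        (f"%{keyword}%",),
    ).fetchall()
    conn.executemany(
        "UPDATE memory SET tier = 'hot', last_access = ? WHERE id = ?",
        [(now, row_id) for row_id, _, _ in rows],
    )
    conn.commit()
    return [(content, tier) for _, content, tier in rows]
```

In this sketch nothing is ever deleted. Items cool down as they go unused and warm back up when recalled, which is one simple way for context to accumulate across sessions while staying in a single local database.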

Beyond privacy, local inference eliminates the latency of network round-trips, removes dependency on internet connectivity, and gives you complete control over model versioning, update cycles, and data retention. You are not renting intelligence from a vendor. You are running it yourself, on infrastructure you own.

Any organization where data leaving the building is not an option.

In the near term, our solutions are purpose-built for regulated industries: healthcare organizations that must maintain HIPAA compliance, financial institutions under FINRA and SEC jurisdiction, defense contractors requiring CMMC certification and air-gapped deployments, and law firms protecting the confidentiality of their clients.

For developers and engineering teams, our open source SDK provides a production-ready inference and memory management layer for any application that requires local AI, from embedded systems to enterprise software.

For IoT and robotics teams, our hardware-aware architecture enables full AI capability on edge devices, disconnected field deployments, and autonomous systems where cloud dependency is either impractical or prohibited.

And for any individual or organization that simply believes their data should belong to them, our tools provide enterprise-grade AI without the surveillance model that cloud services depend on.

We are building infrastructure. The range of who benefits from it expands every time a new vertical realizes that AI sovereignty is not optional for them. It is the only viable path forward.

Compliance in regulated industries is not achieved by adding privacy features to a cloud system. It requires a fundamentally different architecture, one where the compliance guarantee is structural, not contractual.

Our infrastructure is designed so that all data processing occurs within your certified environment. For healthcare deployments, this means patient data never leaves your network, satisfying the core technical requirement of HIPAA's Security Rule. For defense deployments, our systems can be configured for fully air-gapped operation with FIPS 140-2 cryptographic compliance. For financial services, our complete audit logging and zero-egress architecture support FINRA and SEC data handling requirements.

We do not claim certifications we have not earned. Our architecture is designed from the ground up to support the path to certification in each of these frameworks. We work with enterprise customers to document their deployment architecture for compliance review, and our technical specifications are available for security audit on request.